The power consumption of data centers is attracting a great deal of interest as our dependence on technology increases. Artificial intelligence is driving an unprecedented surge in electricity demand, with the power needs of AI-optimized data centers projected to more than quadruple by 2030. As a result, data centers now face mounting pressure to balance computational needs with carbon emissions reduction.

Cloud computing and the power-consumption profile of data centers

Cloud computing has revolutionized data storage and processing by enabling users to access computing resources via the Internet. However, this technological advancement comes with a high energy cost. U.S. data centers consumed approximately 176 TWh in 2023, representing 4.4% of total U.S. electricity consumption, with projections showing this could double or triple to 325-580 TWh by 2028.
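The figures in this paragraph can be cross-checked with some simple arithmetic. The sketch below uses only the numbers quoted above; the function and variable names are illustrative and not taken from any cited report.

```python
# Figures quoted in the text: U.S. data center consumption in 2023,
# its share of total U.S. electricity use, and the 2028 projection range.
US_DC_2023_TWH = 176.0
US_DC_SHARE_2023 = 0.044
US_DC_2028_RANGE_TWH = (325.0, 580.0)


def implied_total_twh(part_twh: float, share: float) -> float:
    """Total consumption implied by a part and its fractional share."""
    return part_twh / share


def growth_multiple(future_twh: float, base_twh: float) -> float:
    """How many times larger the future value is than the base value."""
    return future_twh / base_twh


us_total_2023 = implied_total_twh(US_DC_2023_TWH, US_DC_SHARE_2023)
low_multiple = growth_multiple(US_DC_2028_RANGE_TWH[0], US_DC_2023_TWH)
high_multiple = growth_multiple(US_DC_2028_RANGE_TWH[1], US_DC_2023_TWH)

print(f"Implied total U.S. consumption in 2023: {us_total_2023:,.0f} TWh")
print(f"2028 projection: {low_multiple:.2f}x to {high_multiple:.2f}x the 2023 level")
```

On these numbers, total U.S. consumption works out to roughly 4,000 TWh, and the 2028 range corresponds to about 1.8 to 3.3 times the 2023 level, which is consistent with "double or triple" as a rounded description.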

Major cloud providers like Amazon Web Services operate massive data center networks that require substantial power resources. A single hyperscale facility can demand upwards of 100 megawatts, which over a year is roughly the electricity used by 400,000 electric vehicles.

| Activity | Typical Power Draw | Cooling Load Share | Carbon Emissions |
|---|---|---|---|
| Video streaming | 200-400 W per server | 40-45% | High (24/7 operation) |
| Online gaming | 150-300 W per server | 35-40% | Medium-High |
| AI/ML processing | 600+ W per server | 50-55% | Very High |
| Blockchain/Crypto | 400-600 W per server | 45-50% | Extremely High |

Demand from data centers is placing unprecedented strain on utilities, and power density requirements are changing dramatically. Traditional data centers operate at 5-10 kW per rack, while AI-optimized facilities now require 60 kW or more per rack within the same floor area.

Electricity is the bedrock on which data centers are built. Their energy consumption is mainly due to three factors:

- Servers running non-stop: Servers, which are the heart of data centers, must be operational 24/7 to ensure access to stored data. This uninterrupted availability requires constant energy use.

- Equipment cooling: To prevent overheating and maintain server performance, cooling systems are crucial. These systems, which often use air-conditioning technology, consume a great deal of power.

- Security systems: Data centers must be protected from physical and digital intrusion. Security systems, such as surveillance cameras and firewalls, also consume energy.

The energy impact of a data center is also linked to its size: the larger the facility, the higher its total energy consumption. Optimizing electricity use through advanced data center management techniques can significantly reduce energy costs and improve operational efficiency.

With the exponential increase in data generation and cloud computing demand, global data center capacity is expanding at an unprecedented rate. According to Goldman Sachs Research, power demand from data centers is projected to increase by 50% by 2027 and by as much as 165% by 2030 compared to 2023 levels. This dramatic growth is primarily driven by AI technologies, cloud services, and the increasing digitalization of business operations.
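To put the two cumulative figures on a comparable footing, they can be converted into implied average annual growth rates. This is a minimal sketch that assumes 2023 is the base year for both figures and that growth compounds evenly each year; neither assumption comes from the Goldman Sachs research itself, and the function name is illustrative.

```python
def implied_annual_growth(total_increase: float, years: int) -> float:
    """Average compound annual growth rate implied by a cumulative increase.

    total_increase is a fraction (0.50 means +50% over the whole period).
    """
    return (1.0 + total_increase) ** (1.0 / years) - 1.0


BASE_YEAR = 2023  # assumption: both projections are measured against 2023

rate_to_2027 = implied_annual_growth(0.50, 2027 - BASE_YEAR)
rate_to_2030 = implied_annual_growth(1.65, 2030 - BASE_YEAR)

print(f"+50% by 2027  -> about {rate_to_2027:.1%} per year")
print(f"+165% by 2030 -> about {rate_to_2030:.1%} per year")
```

Under these assumptions the 2030 figure implies a higher average rate than the 2027 figure, which would mean growth accelerating in the second half of the decade.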

How much electricity do data centers consume worldwide?

According to the International Energy Agency, data centers currently consume between 2% and 3% of the world's electricity. In 2023, U.S. data centers alone consumed approximately 176 terawatt-hours (TWh), marking a significant increase from previous years.

Some countries face disproportionate impacts. In Ireland, data centers could account for 30% of electricity consumption by 2028. France, with its 264 data centers, consumes around 8.5 TWh annually, representing 2% of its total national consumption.

The collective power needs of global data centers could soon rival entire countries like Japan, highlighting the colossal energy challenge posed by this industry.

What is the annual consumption of data centers worldwide?

The annual consumption of data centers worldwide continues to grow at an alarming pace. By 2030, forecasts suggest consumption could reach up to 13% of global power consumption, primarily due to increasing demand for digital services and exponential data growth.

Hyperscale data centers, which are massive facilities often covering hundreds of acres, are experiencing even faster growth rates. According to recent industry metrics, approximately 137 new hyperscale data centers came online in 2024 alone, with projections indicating capacity growth of at least 20% annually through 2030.

This massive consumption underlines the critical need for environmentally sustainable technologies for optimal operation.

What will the worldwide consumption of data centers be in TWh in 2025?

Current projections for 2025 indicate global data center consumption between 600 TWh and 1,050 TWh, depending on the scenario. According to the U.S. Department of Energy, consumption is expected to reach between 325 and 580 TWh by 2028 in the United States alone.

This variability depends primarily on the development of energy efficiency technologies and management practices. An estimate of 800 TWh appears plausible in the middle of the spectrum, representing a substantial increase from the 460 TWh consumed in 2022.

Significant efforts in improving energy efficiency and integrating renewable energy sources will be essential to manage this growth responsibly.

How much power will data centers use in 2030?

By 2030, the International Energy Agency projects that global data center electricity demand will reach approximately 945 TWh, slightly more than Japan's entire electricity consumption today. Goldman Sachs forecasts that AI will be the primary driver, with AI-optimized data centers' power needs quadrupling by 2030. This growth will require an estimated $720 billion in grid infrastructure investments to support the increased data center capacity.

Internet giants and data centers: the case of Google

Google, a major player in data center power consumption

Google is a key player in the field of data centers, with electricity consumption reaching 30.8 million megawatt-hours in 2024—more than double its 2020 levels. In the United States, which hosts approximately 36% of global data center capacity, power consumption by data centers is projected to account for almost half of the country's electricity demand growth through 2030. While Google's consumption is massive, it maintains competitive efficiency compared to Amazon Web Services, with both companies racing to meet escalating AI computing power needs that are straining the power grid.

Despite this substantial energy use, Google has achieved remarkable progress in optimizing efficiency. Their data centers maintain an industry-leading Power Usage Effectiveness (PUE) of 1.09 as of 2025, meaning they use about 84% less overhead energy than the industry average. Google has also reached 66% carbon-free energy across its data center operations, reducing carbon emissions despite growing energy demands.
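The "84% less overhead" comparison follows from how PUE is defined: the overhead per unit of IT energy is simply PUE minus 1. The sketch below backs out the industry-average PUE that the 84% figure implies. That average is not stated in the text, so treat the result as an inference rather than a quoted value; the function names are illustrative.

```python
def overhead_per_it_unit(pue: float) -> float:
    """Non-IT energy (cooling, power conversion, etc.) per unit of IT energy."""
    return pue - 1.0


def implied_reference_pue(pue: float, overhead_reduction: float) -> float:
    """PUE of the reference fleet, given that `pue` has `overhead_reduction`
    (as a fraction) less overhead than the reference."""
    reference_overhead = overhead_per_it_unit(pue) / (1.0 - overhead_reduction)
    return 1.0 + reference_overhead


google_pue = 1.09           # figure quoted in the text
overhead_reduction = 0.84   # "about 84% less overhead energy"

google_overhead = overhead_per_it_unit(google_pue)
average_pue = implied_reference_pue(google_pue, overhead_reduction)

print(f"Google overhead: {google_overhead:.2f} units per unit of IT energy")
print(f"Implied industry-average PUE: {average_pue:.2f}")
```

In other words, a PUE of 1.09 means 0.09 units of overhead for every unit delivered to IT equipment, and the 84% claim is consistent with an industry average of roughly 1.56.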

Google’s efforts to reduce power consumption

To minimize its power consumption, Google focuses on several areas. One of the main strategies is the optimization of its data centers. In particular, it uses machine-learning systems based on artificial neural networks, developed by DeepMind, Google's AI research lab. This AI-powered system has reduced cooling energy requirements by approximately 30% and continues to find novel efficiency improvements that surprise even data center operators. Google has also redesigned its servers to be more energy-efficient, using highly efficient power supplies and installing batteries directly on machines, enabling it to build some of the highest-performing servers in the sector.

As AI energy costs continue to escalate, with inference operations now consuming 80-90% of AI computing resources, these efficiency innovations become increasingly critical.

How can we reduce the energy impact of data centers?

To reduce their energy impact, data centers can adopt "green initiatives" such as improving energy efficiency, integrating renewable energy sources, and implementing innovative cooling practices. By 2030-2035, data centers could account for up to 20% of global electricity use, making such initiatives essential for sustainability.

Efforts to improve energy and resource management, such as server virtualization, which enables several virtual systems to run on a single physical server, can also help to reduce energy consumption significantly. Other promising approaches include heat recycling, smart energy management systems, and the adoption of nuclear and geothermal energy sources to diversify power supplies beyond traditional renewables.

Key strategies for greener, power-efficient data centers

The drive toward sustainable data center operations has intensified as electricity consumption continues to rise. According to Lawrence Berkeley National Laboratory's 2024 report, data center electricity demand is projected to double or triple by 2028, making green initiatives no longer optional but essential for both environmental and economic reasons.

Energy-efficiency measures

- Smart energy management: Advanced real-time analytics platforms now monitor ESG performance across energy usage, carbon output, and resource efficiency. These systems incorporate predictive modeling, allowing operators to test the impact of sustainability initiatives before deployment, reducing risk while ensuring measurable environmental benefits.

- Environmental certifications: Data centers increasingly pursue certifications like LEED or Energy Star to demonstrate their commitment to sustainable practices. These certifications establish industry standards while providing access to ESG-linked financing and green bonds, creating financial incentives for environmental leadership.

- Transitioning from natural gas: While natural gas has been positioned as a "bridge fuel," forward-thinking operators are accelerating the shift to fully renewable sources. This transition reduces carbon emissions while future-proofing operations against increasingly stringent environmental regulations.

- Virtualization and server consolidation: By running multiple virtual systems on single physical servers, data centers significantly reduce their hardware footprint. This strategy not only decreases energy consumption but also minimizes space requirements and associated operational costs.

Circular-economy practices

- Heat recycling systems: Recovering waste heat from data centers to warm nearby offices and residential spaces transforms an operational byproduct into a valuable resource. This approach minimizes external energy demands for local communities while creating mutually beneficial relationships with surrounding areas.

- Recycled water utilization: Implementing closed-loop cooling systems that use recycled water reduces freshwater consumption by up to 80% in some facilities. This practice promotes sustainable water resource management while decreasing operational expenses.

- Community engagement initiatives: Transparent reporting of environmental efforts builds trust with local communities and stakeholders. Publishing sustainability metrics and participating in local environmental programs improves corporate image while fostering positive community relations.

These initiatives not only benefit the environment but also significantly reduce operational costs, demonstrating that sustainable practices and economic profitability can successfully coexist in the data center industry.

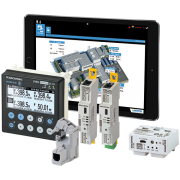

Our solutions for reducing data center power consumption without compromising power availability

Socomec is committed to supporting data centers in their energy transition by providing them with various data center power efficiency solutions. As the power sector faces unprecedented demands with new data center construction expected to double electricity consumption by 2028, optimizing energy use has become mission-critical.

Our technologies directly impact key industry metrics like Power Usage Effectiveness (PUE), helping facilities achieve the industry-leading values of 1.2 or below that characterize today's most efficient operations. Through strategic data center electrification and power management, Socomec solutions deliver measurable improvements:

- High-efficiency UPS: Every energy loss counts, and a UPS operating at optimum efficiency keeps losses to a minimum. The Smart Conversion Mode available for the MODULYS modular UPS cuts losses by a factor of 5 and achieves an efficiency of 99.1% without compromising the quality of the power supply.

- Energy monitoring systems: The DIRIS Digiware multi-circuit electrical energy monitoring system has an accuracy class 0.5 and exclusive technologies to detect inefficiencies and reduce operating costs. This scalable system monitors electrical power consumption, quality, and residual currents regardless of the distribution method (PDU or busway).

- Energy storage systems: Energy storage systems such as SUNSYS HES L enable better integration of renewable energy sources, such as solar or wind power, by storing excess energy produced for later use. In the event of failure, storage systems can also reduce the need to use diesel generators, helping to cut carbon emissions and fuel costs.
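To illustrate why UPS efficiency matters at scale, the sketch below estimates conversion losses for a given IT load. The 1,000 kW load is hypothetical, and the 95.5% comparison efficiency is an assumption backed out from the "factor of 5" claim above (0.9% losses multiplied by 5), not a published specification. The model also assumes the load is constant all year.

```python
HOURS_PER_YEAR = 8760  # assumption: continuous operation at constant load


def ups_loss_kw(load_kw: float, efficiency: float) -> float:
    """Power lost in the UPS when delivering `load_kw` at a given efficiency.

    Efficiency is output power divided by input power, so the input is
    load / efficiency and the loss is the difference.
    """
    return load_kw / efficiency - load_kw


def annual_loss_mwh(load_kw: float, efficiency: float) -> float:
    """Energy lost over one year, in MWh."""
    return ups_loss_kw(load_kw, efficiency) * HOURS_PER_YEAR / 1000.0


load_kw = 1000.0              # hypothetical IT load
high_efficiency = 0.991       # figure quoted in the text
comparison_efficiency = 0.955 # assumption, see lead-in

for label, eff in [("99.1%", high_efficiency), ("95.5%", comparison_efficiency)]:
    print(f"{label}: {ups_loss_kw(load_kw, eff):.1f} kW lost, "
          f"{annual_loss_mwh(load_kw, eff):.0f} MWh per year")
```

Because these losses end up as heat, they also add to the cooling load, so the real-world benefit of a more efficient UPS is somewhat larger than the conversion loss alone.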

AI-driven data centers and rising energy demand

Why does AI need data centers?

Artificial Intelligence requires massive computing power to process complex algorithms and vast datasets. Data centers provide the essential infrastructure for AI operations, offering the high-performance computing capabilities needed for training and inference workloads. Modern AI models demand 10 times more resources than traditional cloud applications, requiring specialized facilities with sufficient power, cooling, and redundancy to handle these intensive workloads.

How much energy does AI use?

AI's energy appetite is substantial and growing rapidly. According to Deloitte, data centers will consume approximately 536 TWh of electricity in 2025, representing about 2% of global electricity consumption. This figure could double to 1,065 TWh by 2030 as AI computing power requirements continue to escalate. Training a single large AI model can consume energy equivalent to hundreds of households over several months, while even routine inference operations significantly increase power demands compared to traditional computing tasks.

AI energy problem: will power demand outstrip supply?

The surging power requirements for AI computing present a critical challenge for energy infrastructure. McKinsey research indicates that AI data centers could consume 11-12% of the United States' total electricity by 2030, potentially creating supply deficits in many regions. This unprecedented growth is outpacing grid capacity expansion, with aging power infrastructure struggling to meet these new demands. The race to secure reliable power for AI operations is driving data center investments toward energy-abundant locations and spurring innovations in power efficiency and alternative energy solutions.

Water usage in data centers: cooling challenges and solutions

How much water do AI data centers use?

AI data centers consume staggering amounts of water primarily for cooling their heat-generating server equipment. According to recent studies from Stanford University and Lawrence Berkeley National Laboratory, data centers in the United States consumed approximately 17.5 billion gallons of water in 2023, representing about 0.3% of the total public water supply. The scale varies dramatically by facility size—a typical large data center can consume up to 5 million gallons of water daily, equivalent to the water needs of a town with 10,000 to 50,000 residents. Google's global data center operations alone used approximately 5.2 billion gallons annually, while Meta's facilities consumed 663 million gallons, with the average Google data center requiring about 550,000 gallons daily.

Data center water usage and environmental impact

The environmental footprint of data center water consumption extends far beyond simple volume metrics. Data centers typically evaporate about 80% of the water they draw, compared to just 10% for residential usage, making them particularly intensive on local water resources. This has created significant challenges for utilities and communities, especially in water-stressed regions where approximately two-thirds of new data centers have been built since 2022. In Phoenix alone, nearly 60 data centers collectively consume about 177 million gallons of water daily. To mitigate these impacts, forward-thinking operators are implementing solutions mentioned earlier in our discussion of green initiatives, including recycled-water systems where data centers partner with local utilities to use treated sewage instead of drinking water, and adiabatic cooling technologies that significantly reduce overall water requirements.

Calculating and benchmarking data center power consumption

How to calculate data center power consumption?

Data center power consumption can be calculated using the formula: Total Power (kW) = Server Power (W) × Number of Servers ÷ 1,000. This provides the baseline IT power requirements. For comprehensive metrics, measure both IT equipment power and facility overhead (cooling, lighting, security systems). Advanced monitoring systems like DIRIS Digiware can track these metrics in real-time, providing accurate consumption data for optimization decisions.
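The formula above translates directly into code. This is a minimal sketch: the 400 W server draw and 5,000-server count are hypothetical inputs, and the annual figure assumes the servers draw constant power all year, which real workloads do not.

```python
HOURS_PER_YEAR = 8760  # assumption: 24/7 operation at constant draw


def total_it_power_kw(server_power_w: float, server_count: int) -> float:
    """Total Power (kW) = Server Power (W) x Number of Servers / 1,000."""
    return server_power_w * server_count / 1000.0


def annual_energy_mwh(power_kw: float) -> float:
    """Energy used in one year at a constant power draw, in MWh."""
    return power_kw * HOURS_PER_YEAR / 1000.0


# Hypothetical example: 5,000 servers drawing 400 W each.
it_power = total_it_power_kw(server_power_w=400.0, server_count=5000)
it_energy = annual_energy_mwh(it_power)

print(f"Baseline IT power: {it_power:,.0f} kW")
print(f"Annual IT energy:  {it_energy:,.0f} MWh")
```

This gives only the IT baseline. Facility overhead such as cooling and lighting has to be measured separately or estimated from a PUE value.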

Power Usage Effectiveness (PUE) and other metrics

Power Usage Effectiveness (PUE), standardized under ISO/IEC 30134-2:2016, is the most widely used efficiency metric. It is calculated by dividing total facility energy by IT equipment energy. A PUE of 1.0 would mean that all of the energy entering the facility reaches the IT equipment, with no overhead. Beyond PUE, modern data centers track additional metrics like IT Equipment Utilization Effectiveness (ITUE), Cooling Efficiency Ratio (CER), and cabinet-level power density (kW/rack) to comprehensively evaluate performance. Typical PUE values vary by facility type:

- Hyperscale data centers: Leading facilities achieve PUE ratings of 1.09-1.20, with Google reporting a fleet-wide 1.09 PUE in 2025

- Enterprise data centers: Typically operate between 1.5-1.8 PUE, with newer facilities trending toward 1.4

- Colocation facilities: Average PUE ranges from 1.3-1.6, depending on facility age and cooling technology

- Edge computing sites: Smaller installations typically range from 1.5-2.0 PUE due to cooling constraints and lower economies of scale
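The PUE definition and the ranges listed above can be combined to estimate how much total facility power a given IT load requires in each type of facility. The sketch below uses the PUE ranges from the list; the 1,000 kW IT load and all names are hypothetical, chosen only for illustration.

```python
def pue(total_facility_energy: float, it_equipment_energy: float) -> float:
    """PUE = total facility energy / IT equipment energy (same units)."""
    if it_equipment_energy <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_energy / it_equipment_energy


def facility_power_kw(it_load_kw: float, pue_value: float) -> float:
    """Total facility power needed to support an IT load at a given PUE."""
    return it_load_kw * pue_value


# PUE ranges quoted in the list above (low, high).
PUE_RANGES = {
    "Hyperscale": (1.09, 1.20),
    "Enterprise": (1.5, 1.8),
    "Colocation": (1.3, 1.6),
    "Edge": (1.5, 2.0),
}

it_load_kw = 1000.0  # hypothetical IT load

for facility_type, (low, high) in PUE_RANGES.items():
    low_kw = facility_power_kw(it_load_kw, low)
    high_kw = facility_power_kw(it_load_kw, high)
    print(f"{facility_type:<11} {low_kw:,.0f} to {high_kw:,.0f} kW total")
```

For the same IT load, the gap between the best hyperscale figure and the upper edge-site figure is close to a factor of two in total power drawn from the grid.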

FAQ: data center power usage

Where are AI data centers located?

AI data centers are strategically positioned across North America, Europe, and Asia, with major hubs in the U.S., particularly Texas and Northern Virginia. Companies like Google and Microsoft maintain global networks of facilities, with some newer installations specifically optimized for AI workloads in over 25 cities worldwide.

How much energy do AI data centers use?

AI data centers are driving unprecedented energy consumption, with global data center electricity demand projected to double to 945 TWh by 2030. AI processing is particularly power-intensive—creating a single AI image uses energy equivalent to fully charging a smartphone, while data centers could represent 8.6% of US electricity demand by 2035.

North America data center shortage: what's driving it?

North America is experiencing a critical data center shortage, with vacancy rates hitting a record low of 2.6% in 2025. This crisis stems from explosive AI computing demand, power grid limitations, and concentrated development in key markets. Companies now face 24-month waiting periods for capacity, with an estimated $1 trillion in development needed between 2025 and 2030.

Data center statistics worth knowing

The global data center market is projected to reach $527.46 billion by the end of 2025, with approximately 10 GW of new capacity breaking ground globally this year. North America's hyperscale market alone will hit $138 billion, growing at 22% CAGR through 2030, while Meta, Alphabet, and Amazon are investing $65-100 billion each in AI facilities.